Energy Detective Building Energy Forecasting Competition

The First "Energy Detective" Building Energy Forecasting Competition

Student lead for competition operations, dataset curation, scoring infrastructure, and post-competition research analysis.

📖 Project Overview

In 2020, I served as the student lead for organizing the first "Energy Detective" building energy forecasting competition. The competition centered on a research-driven challenge: predicting the energy consumption of a new building with no historical energy data, using only a limited physical description and data from 20 reference buildings.

My role went beyond logistics and covered the competition end to end. I built the scoring infrastructure, curated the dataset, managed communications with 195 participants across 7 countries and regions, led the award ceremony, and contributed to the post-competition result analysis that became a conference presentation and an SCI journal paper.

Dynamic leaderboard — submission progression during the competition

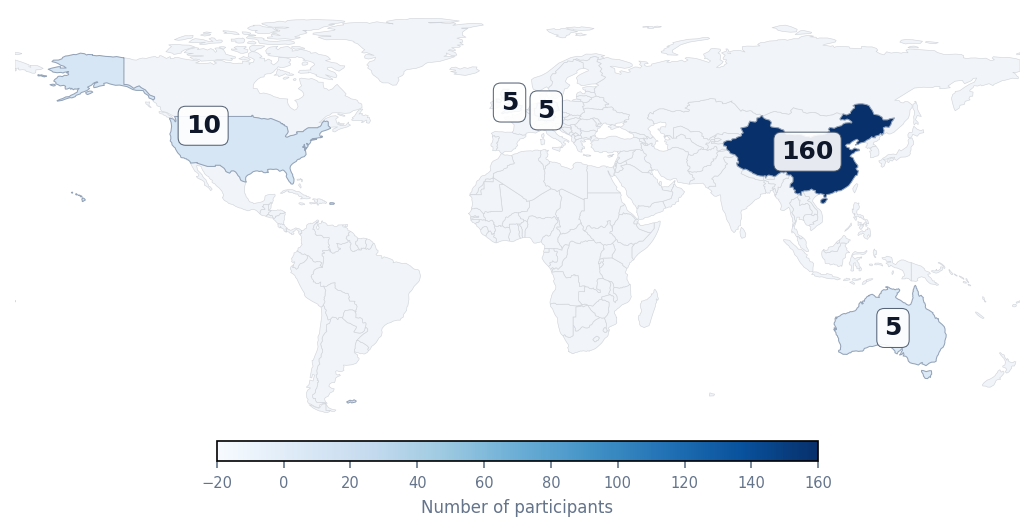

🌏 Who Participated

The competition attracted 195 participants from universities, research institutes, and industry companies across 7 countries and regions: mainland China, Singapore, Germany, the United Kingdom, the United States, Australia, and Hong Kong.

Participating Institutions: word cloud built from registration data.

World Distribution: 195 participants · 7 regions.

💡 Why It Matters

Rather than working on a model in isolation, we translated a meaningful research problem, limited-data building energy prediction, into a structured, reproducible competition task that dozens of teams could engage with simultaneously.

The task was fully blind: no historical energy data for the target building, only limited physical specs. This is much closer to real engineering practice, where a new building has no metering history, than typical benchmark datasets, which usually include the target's own past data.

The post-competition analysis revealed actionable findings: summer HVAC load is more predictable than winter; feature engineering remains the key bottleneck; and white-box/black-box hybrid methods are a promising direction.

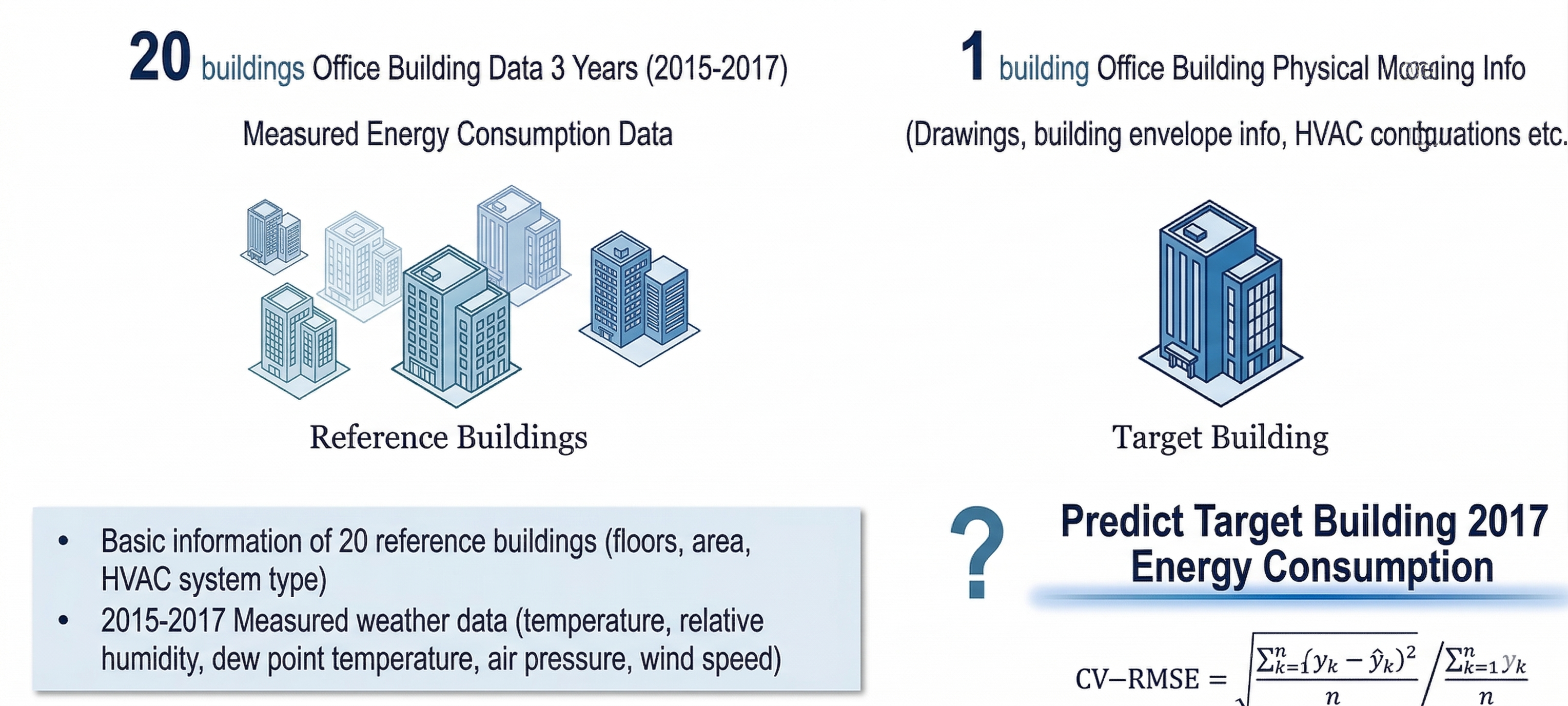

🎯 Competition Task Design

The competition focused on a limited-data forecasting setting: participants were asked to predict the 2017 annual energy consumption of a target office building. The target building had no historical energy records, only limited physical information (drawings, envelope specs, HVAC configuration). Participants could leverage:

- Measured energy data (2015–2017) from 20 reference office buildings

- Basic physical characteristics of all buildings

- Measured weather data for the same period

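Evaluation metric: CV-RMSE (coefficient of variation of the root-mean-square error), i.e. the RMSE of the predictions divided by the mean of the measured values. This design required methods capable of cross-building knowledge transfer, physics-informed feature engineering, and generalization under data scarcity.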
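As a rough illustration of the metric, here is a minimal sketch of CV-RMSE in plain Python. It assumes the common form that divides the squared-error sum by the number of samples `n`; some guidelines use `n - p` instead, and the exact normalization used for the competition is not stated here. The function name `cv_rmse` is my own choice for this example.

```python
import math
from typing import Sequence


def cv_rmse(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Return RMSE divided by the mean of the measured values."""
    if len(measured) != len(predicted):
        raise ValueError("measured and predicted must have the same length")
    if not measured:
        raise ValueError("at least one sample is required")

    n = len(measured)
    mean_measured = sum(measured) / n
    if mean_measured == 0:
        raise ValueError("mean of measured values is zero; CV-RMSE is undefined")

    # Mean squared error over all time steps (assumption: n in the denominator).
    mse = sum((p - m) ** 2 for m, p in zip(measured, predicted)) / n
    return math.sqrt(mse) / mean_measured
```

Because the error is normalized by the mean measured value, scores stay comparable across buildings of different size, which matters when reference and target buildings differ in scale.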

Competition Task Illustration: Leveraging reference building data to predict a target building's performance under data scarcity.
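To make "cross-building knowledge transfer" concrete, here is a deliberately naive baseline sketch. It is not any participant's method and not part of the competition materials. It assumes that floor area is among the available physical characteristics and that all reference series cover the same time steps. The function name `intensity_baseline` and the data layout are hypothetical.

```python
from typing import Dict, List, Sequence


def intensity_baseline(
    reference_energy: Dict[str, Sequence[float]],
    reference_area: Dict[str, float],
    target_area: float,
) -> List[float]:
    """Predict target energy as mean reference intensity times target floor area."""
    if not reference_energy:
        raise ValueError("at least one reference building is required")
    lengths = {len(series) for series in reference_energy.values()}
    if len(lengths) != 1:
        raise ValueError("all reference series must cover the same time steps")
    n_steps = lengths.pop()

    # Energy use intensity per building and time step (e.g. kWh per m2).
    intensities = [
        [value / reference_area[name] for value in series]
        for name, series in reference_energy.items()
    ]

    # Average intensity across buildings, then scale by the target's floor area.
    n_buildings = len(intensities)
    return [
        target_area * sum(row[t] for row in intensities) / n_buildings
        for t in range(n_steps)
    ]
```

A baseline like this ignores envelope, HVAC configuration, and weather, so it mainly serves as a floor against which more informed transfer methods could be compared.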

⚙️ My Role & Leadership

Dataset & Task Design

- Processed raw meter data and physical model specs into a publishable competition dataset

- Architected the "limited-data" task structure and documentation

Infrastructure & Operations

- Built automated scoring pipeline for CV-RMSE evaluation

- Managed 140+ valid submissions and real-time leaderboard updates

- Implemented data deduplication and validation protocols
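The scoring flow described above (validate, deduplicate, score, update the leaderboard) could look roughly like the sketch below. This is an illustrative reconstruction under my own assumptions, not the code that ran during the competition: the validation rules, the per-team deduplication by content hash, and all names (`Leaderboard`, `validate`, `fingerprint`) are hypothetical.

```python
import hashlib
import math
from typing import Dict, List, Optional, Sequence, Set, Tuple


def cv_rmse(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """RMSE divided by the mean of the measured values (n in the denominator)."""
    n = len(measured)
    mse = sum((p - m) ** 2 for m, p in zip(measured, predicted)) / n
    return math.sqrt(mse) / (sum(measured) / n)


def validate(values: Sequence[float], expected_len: int) -> None:
    """Reject submissions with the wrong length or unusable values."""
    if len(values) != expected_len:
        raise ValueError(f"expected {expected_len} values, got {len(values)}")
    for i, v in enumerate(values):
        if not math.isfinite(v) or v < 0:
            raise ValueError(f"value at index {i} must be finite and non-negative")


def fingerprint(values: Sequence[float]) -> str:
    """Hash the values (rounded to 6 decimals) to detect repeated submissions."""
    payload = ",".join(f"{v:.6f}" for v in values).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class Leaderboard:
    def __init__(self, truth: Sequence[float]) -> None:
        self._truth = list(truth)
        self._seen: Set[Tuple[str, str]] = set()
        self._best: Dict[str, float] = {}

    def submit(self, team: str, values: Sequence[float]) -> Optional[float]:
        """Score a submission; return None if the team already sent these values."""
        validate(values, len(self._truth))
        key = (team, fingerprint(values))
        if key in self._seen:
            return None
        self._seen.add(key)
        score = cv_rmse(self._truth, values)
        # Keep only each team's best (lowest) score.
        if team not in self._best or score < self._best[team]:
            self._best[team] = score
        return score

    def ranking(self) -> List[Tuple[str, float]]:
        """Teams ordered by their best (lowest) CV-RMSE."""
        return sorted(self._best.items(), key=lambda item: item[1])
```

Validating before scoring keeps malformed files off the leaderboard, and hashing the submitted values means an identical resubmission from the same team is not scored or counted twice.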

Communications & Scaling

- Managed public outreach: 5+ WeChat technical articles published

- Communicated directly with 195 participants across 7 countries and regions

- Designed all competition visual identities and awards

Coordination & Leadership

- Liaised between university, sponsors, and international experts

- Hosted and moderated the virtual Award Ceremony

- Supervised the shortlisted team report evaluation process

Research Translation

- Led the post-competition result analysis and methodological summary

- Presented findings at the National HVAC Simulation Annual Meeting

- First-authored an SCI-indexed journal paper in Applied Energy (2022)

🔬 Key Findings from Result Analysis

Under fully blind conditions (no historical target data), the best submission reached a CV-RMSE of 0.67, establishing a concrete baseline for cross-building transfer in limited-data settings. (MAPE analysis pending.)

Summer air-conditioning energy predictions consistently outperformed winter heating predictions across methods, suggesting that summer cooling is the more tractable sub-problem.
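A seasonal comparison of this kind can be sketched by splitting the evaluation records by month and computing CV-RMSE per group. The month-to-season mapping below (June to August as summer, December to February as winter) is an assumption for illustration only. The actual season boundaries and data layout used in the analysis are not given here, and `seasonal_cv_rmse` is a hypothetical name.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Sequence, Tuple

# Assumed mapping; other months are ignored in this sketch.
SEASON_BY_MONTH = {
    12: "winter", 1: "winter", 2: "winter",
    6: "summer", 7: "summer", 8: "summer",
}


def cv_rmse(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """RMSE divided by the mean of the measured values (n in the denominator)."""
    n = len(measured)
    mse = sum((p - m) ** 2 for m, p in zip(measured, predicted)) / n
    return math.sqrt(mse) / (sum(measured) / n)


def seasonal_cv_rmse(
    records: Iterable[Tuple[int, float, float]],
) -> Dict[str, float]:
    """Compute CV-RMSE per season from (month, measured, predicted) records."""
    measured: Dict[str, List[float]] = defaultdict(list)
    predicted: Dict[str, List[float]] = defaultdict(list)
    for month, m, p in records:
        season = SEASON_BY_MONTH.get(month)
        if season is None:
            continue  # shoulder months are outside this comparison
        measured[season].append(m)
        predicted[season].append(p)
    return {s: cv_rmse(measured[s], predicted[s]) for s in measured}
```

Computing the metric separately per season normalizes each error by that season's own mean load, so a lower summer value reflects relative accuracy rather than just a different load level.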

Latent features, particularly building characteristics not directly available in specs, were consistently underutilized, pointing to a major open research opportunity.

Despite their complexity, physics-informed approaches combined with data-driven models showed the most potential in this limited-data regime.

📎 Resources & Outputs

What I valued most about this project was not just that we ran a competition to completion — it was that we turned a scattered set of practical tasks (data cleaning, scoring algorithms, logistics, and reporting) into a coherent, research-facing workflow. For me, it was the first time I experienced how task definition, data organization, evaluation protocol, and community engagement are just as important to a research contribution as the model itself.